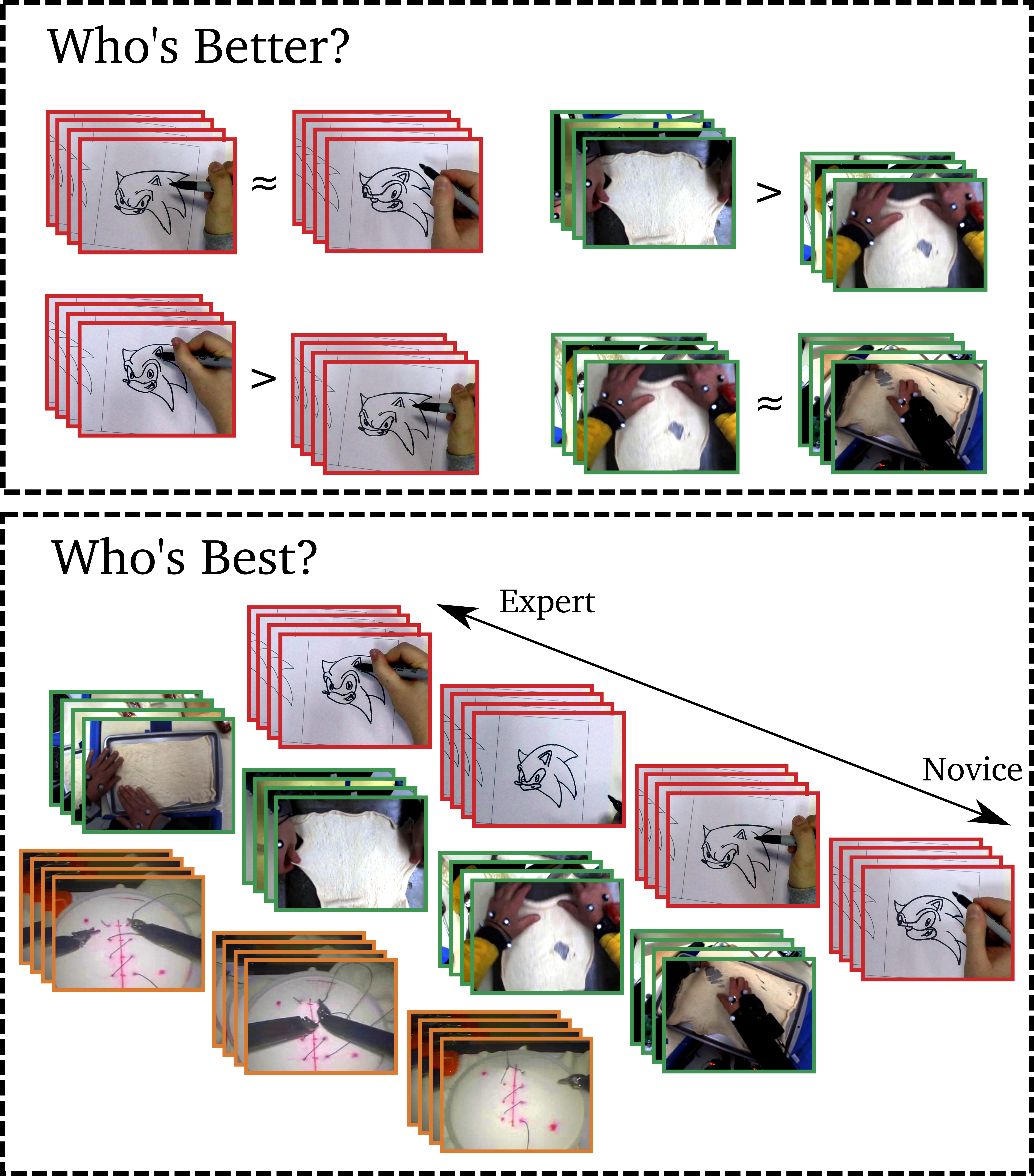

At CVPR 2018, we presented a method for assessing skill from video, applicable to a variety of tasks, ranging from surgery to drawing and rolling pizza dough. We formulate the problem as pairwise (

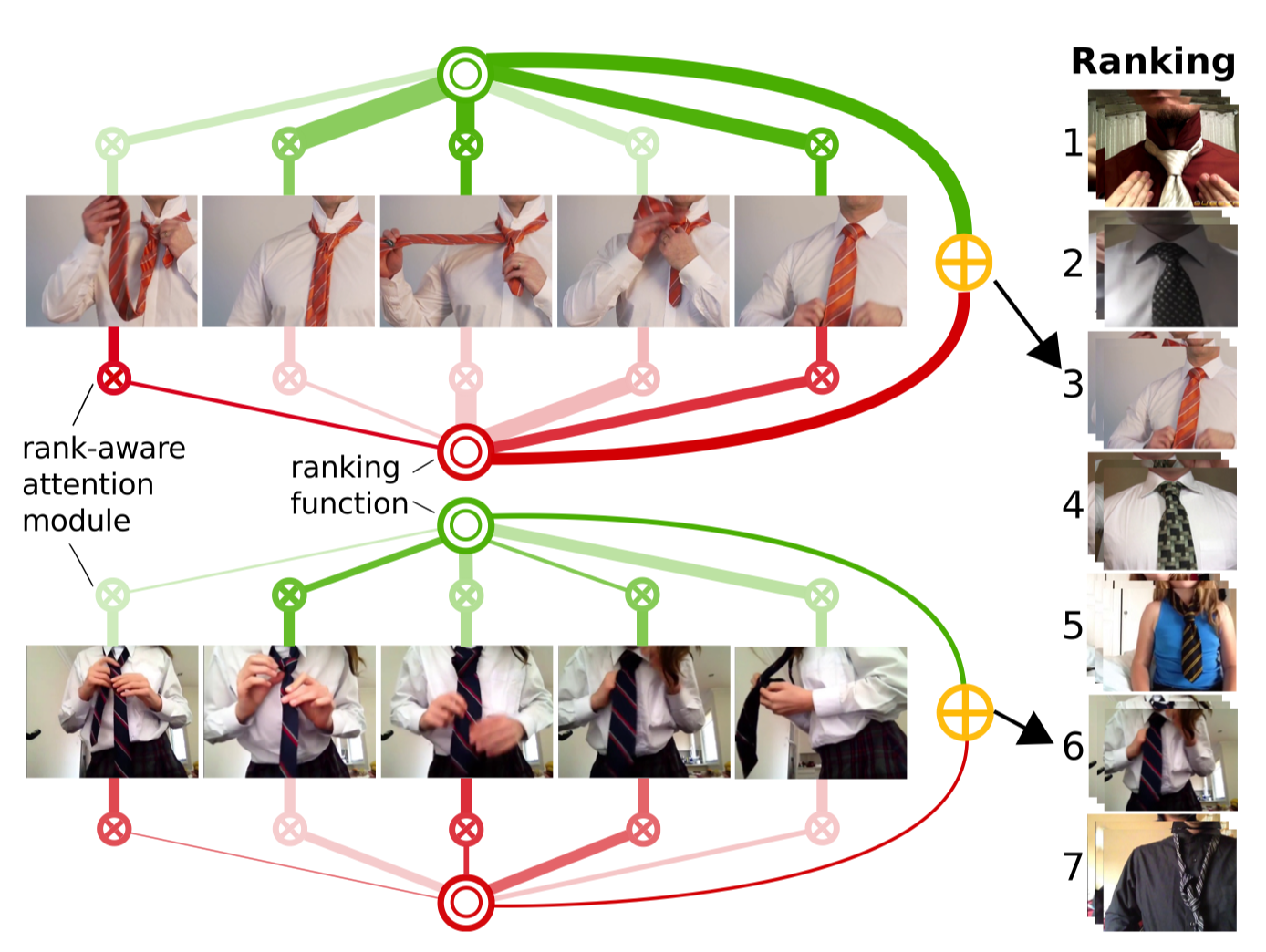

In December 2018, we presented a new model for determining relative skill from long videos using learnable temporal attention modules. We propose training rank-specific temporal attention modules, learned with only video-level supervision, using a novel rank-aware loss function. In addition to attending to task-relevant video parts, our proposed loss jointly trains two attention modules to separately attend to video parts that are indicative of higher (pros) and lower (cons) skill.
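
To make the idea of attention-pooled pairwise ranking concrete, here is a minimal NumPy sketch. It is not our released implementation, and it deliberately swaps the rank-aware loss for a plain margin ranking loss applied to each of two attention branches. All names (`attention_pool`, `skill_score`, `pair_loss`, the `"high"`/`"low"` parameter keys) and the linear attention and scoring layers are assumptions chosen for illustration only.

```python
import numpy as np


def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    shifted = x - np.max(x)
    exp = np.exp(shifted)
    return exp / exp.sum()


def attention_pool(features, w_att):
    """Pool per-segment features (T, D) into one video-level vector (D,).

    w_att is a hypothetical linear attention parameter of shape (D,).
    Returns the pooled vector and the temporal attention weights (T,).
    """
    weights = softmax(features @ w_att)
    return weights @ features, weights


def skill_score(features, w_att, w_score):
    """Scalar skill score from attention-pooled features (assumed linear scorer)."""
    pooled, _ = attention_pool(features, w_att)
    return float(pooled @ w_score)


def margin_ranking_loss(score_better, score_worse, margin=1.0):
    """Standard hinge loss: the better video should outscore the worse by `margin`."""
    return max(0.0, margin - (score_better - score_worse))


def pair_loss(better, worse, params, margin=1.0):
    """Sum a margin ranking loss over two attention branches.

    Only video-level supervision is used: we know which video of the pair
    shows higher skill, not which segments matter. This simplified loss does
    NOT by itself push the branches toward "pros" versus "cons" segments.
    """
    total = 0.0
    for branch in ("high", "low"):
        w_att, w_score = params[branch]
        total += margin_ranking_loss(
            skill_score(better, w_att, w_score),
            skill_score(worse, w_att, w_score),
            margin,
        )
    return total
```

With all-zero parameters both videos score 0 in each branch, so each branch contributes the full margin. Training would lower this loss by adjusting both the attention weights and the scorer, using only the pairwise label.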